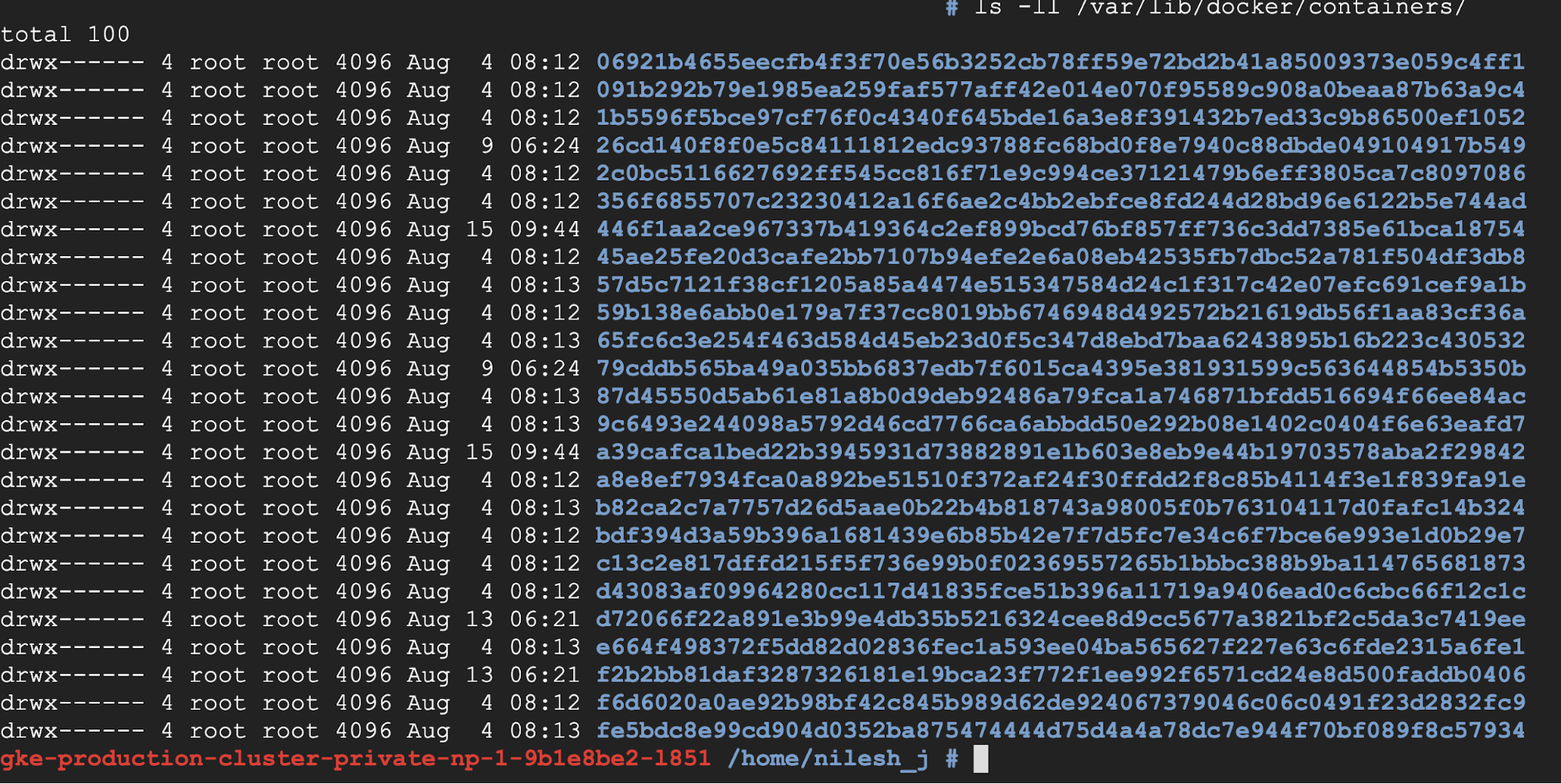

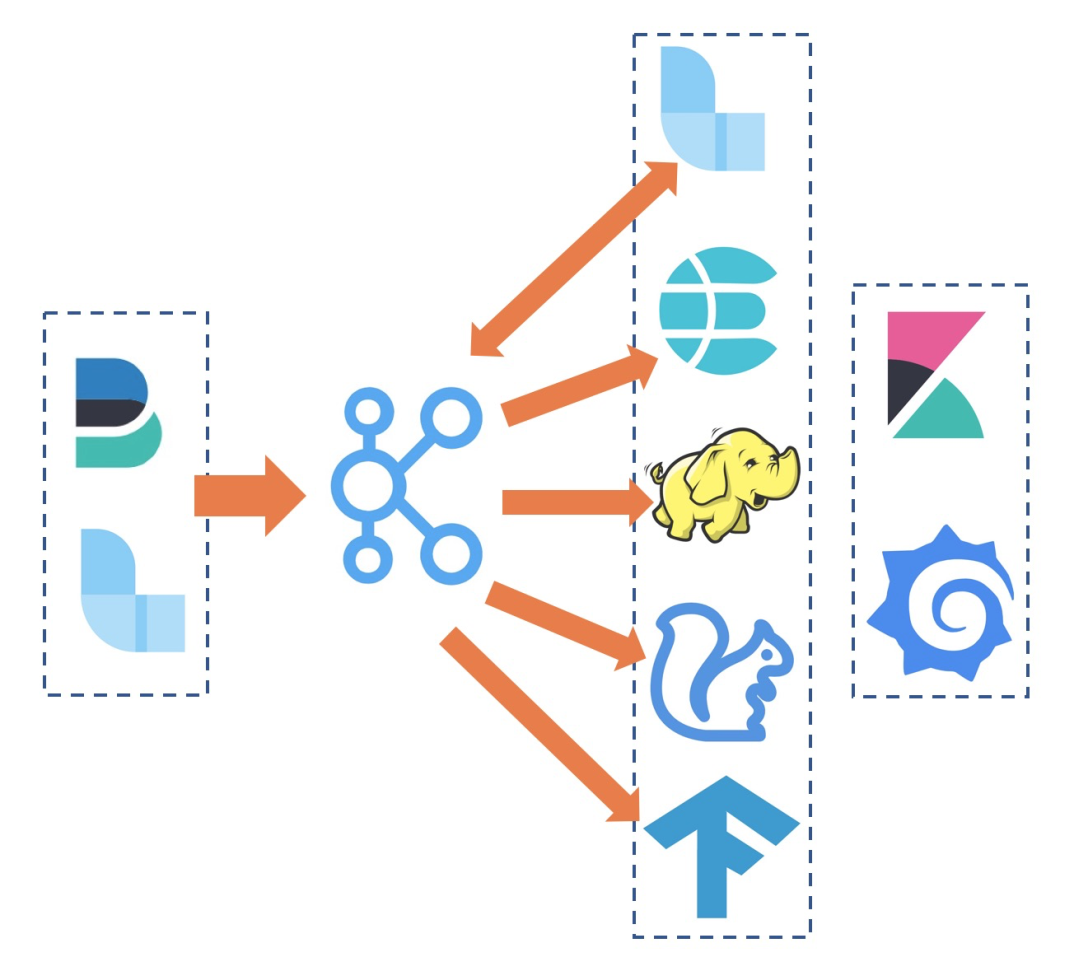

If your cluster forwards logs to Elasticsearch, you can configure Airflow to retrieve task logs from it. See the Elasticsearch providers guide for more details.

Those of you familiar with Airflow may be aware of the bloat it accumulates. Earlier on, we had little automation around the maintenance of our Airflow cluster.

Inside a task, get the task logger with `LOGGER = logging.getLogger("airflow.task")` and write messages through it, for example `LOGGER.info("run date is : " + str(run_date))`. A logger for Airflow itself can be declared as `log: logging.Logger = logging.getLogger("airflow")`.

Apache Airflow aims to be a very Kubernetes-friendly project, and many users run Airflow from within a Kubernetes cluster in order to take advantage of the increased stability and autoscaling options that Kubernetes provides.

To install this chart using Helm 3, add the `apache-airflow` Helm repository and then run `helm upgrade --install airflow apache-airflow/airflow --namespace airflow --create-namespace`. The command deploys Airflow on the Kubernetes cluster in the default configuration. The Parameters reference section lists the parameters that can be configured.

In this article we have outlined how you can set up remote S3 logging when using KubernetesExecutor, without creating complex infrastructure.

Let's start by defining some variables that we are going to need for defining our infrastructure. Create a file called terraform/variables. The logging settings in the Airflow configuration include `logging_config_class = log_config.LOGGING_CONFIG`, `extra_logger_names = connexion,sqlalchemy`, and `colored_log_format`. The DAG code imports `PythonOperator` and uses `from kubernetes.client import models as k8s`.

In a previous blog post I presented a method to deploy infrastructure with Terraform by using GitHub Actions and a remote backend, but to keep this tutorial simple we will simply use a local backend here.

A Kubernetes watcher is a thread that can subscribe to every change that occurs in the Kubernetes database. It is alerted when pods start, run, end, and fail.
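The task-logging calls mentioned above can be tried without an Airflow installation, since `airflow.task` is just a logger name as far as the standard library is concerned. The following is a minimal sketch under that assumption: `log_run_date` and `demo` are hypothetical helper names, and the in-memory handler only stands in for whatever handler Airflow attaches in a real deployment.

```python
import io
import logging
from datetime import date

# "airflow.task" is the logger name used in the text. Outside Airflow it is
# an ordinary named logger, so this sketch runs with the standard library only.
LOGGER = logging.getLogger("airflow.task")


def log_run_date(run_date: date) -> None:
    """Log the run date the way a task callable might (hypothetical helper)."""
    # Lazy %-style arguments: the message is only built if INFO is enabled.
    LOGGER.info("run date is : %s", run_date)


def demo() -> str:
    """Attach a temporary in-memory handler and return what was logged."""
    stream = io.StringIO()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(name)s %(levelname)s %(message)s"))
    LOGGER.addHandler(handler)
    LOGGER.setLevel(logging.INFO)
    try:
        log_run_date(date(2024, 1, 31))
    finally:
        # Always detach the handler so repeated calls do not duplicate output.
        LOGGER.removeHandler(handler)
    return stream.getvalue()


if __name__ == "__main__":
    print(demo(), end="")
```

Passing the value as a logging argument instead of concatenating with `str(run_date)` gives the same message while deferring the string formatting until the record is actually emitted.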
Airflow Log Integration with Fluent Bit + ELK Stack (Kubernetes): High Level Architecture

Apache Airflow is an open-source distributed workflow management platform for authoring, scheduling, and monitoring multi-stage workflows. It is designed to be extensible, and it's compatible with several services like Amazon Elastic Kubernetes Service (Amazon EKS), Amazon Elastic Container Service (Amazon ECS), and Amazon EC2. Many AWS customers choose to run Airflow on containerized environments. AWS for Fluent Bit is employed for logging.

The KubernetesPodOperator can be considered a substitute for a Kubernetes object spec definition that is able to be run in the Airflow scheduler in the DAG context. A Kubernetes Persistent Volume Claim is used for mounting Airflow DAGs into the Airflow pods.

In this tutorial, we will deploy an AKS cluster using Terraform and install Airflow on the AKS cluster. All code used in this tutorial can be found on GitHub. Before we start this tutorial, make sure the following prerequisites have been met: you have kubectl installed, for example with `az aks install-cli`.

Let's get started!

Deploying AKS using Terraform

Before we can install Airflow on a Kubernetes cluster, we should first create the cluster.
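For the Fluent Bit and ELK integration named above, log shippers are usually easiest to work with when each log record is a single JSON line. The sketch below shows one way to produce such lines with the standard `logging` module. It is an illustration, not Airflow's own log format: `JsonLineFormatter`, `build_logger`, the logger name `example.json_task`, and the `task_context` field are all hypothetical names, and whether Fluent Bit parses the lines as JSON depends on how its parser is configured in your cluster.

```python
import io
import json
import logging


class JsonLineFormatter(logging.Formatter):
    """Render each record as one JSON object per line (hypothetical format)."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Optional context passed via `extra={"task_context": {...}}`.
        payload.update(getattr(record, "task_context", {}))
        return json.dumps(payload, sort_keys=True)


def build_logger(stream) -> logging.Logger:
    """Return a logger that writes JSON lines to the given stream."""
    logger = logging.getLogger("example.json_task")
    logger.handlers.clear()  # avoid duplicate handlers on repeated calls
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonLineFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep the demo output limited to this handler
    return logger


if __name__ == "__main__":
    buffer = io.StringIO()
    logger = build_logger(buffer)
    logger.info(
        "task finished",
        extra={"task_context": {"dag_id": "example_dag", "task_id": "extract"}},
    )
    print(buffer.getvalue(), end="")
```

In a pod, the stream would typically be standard output, which is where a node-level log collector reads container logs from.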

Running Apache Airflow on Kubernetes is a powerful way to manage and orchestrate your workloads, but it can also be a bit daunting.
